Australia/Israel Review

Anti-social media

Feb 4, 2020 | Naomi Levin

Antisemitism? There’s an app for that.

There is a good chance you haven’t spent a lot of time on the social media app TikTok.

Unless you like watching videos of young people lip-synching to pop music or dancing through a shopping centre, it probably isn’t for you.

However, its popularity is growing. The Chinese-owned video app has been downloaded more than 1.5 billion times globally, and in 2019, it was downloaded more times than Facebook or Instagram.

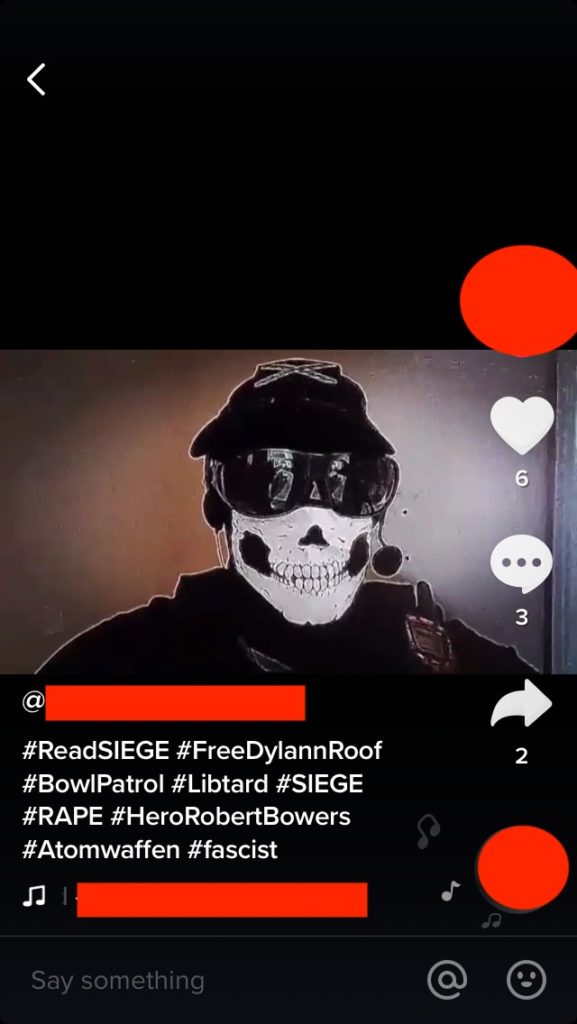

TikTok has also joined the ranks of social media apps that host antisemitic content.

Research published in December by Vice discovered TikTok posts lauding neo-Nazi groups and recent perpetrators of hate crimes, individuals making Nazi salutes for the camera and accounts that featured offensive caricatures of Jewish people.

Follow-up investigations by HuffPost found that controversial far-right figures, who had been banned from Facebook and Twitter for posting hateful content, were active on TikTok.

The Australia/Israel Review confirmed this and found TikTok videos featuring far-right extremists Alex Jones, Paul Joseph Watson and followers of the hate group Proud Boys, which has a presence in Australia.

TikTok is not alone in hosting antisemitic and other hateful content.

Music streaming app Spotify – 250 million users globally – was recently caught up in a similar scandal involving user-generated playlists.

While the playlists largely contained mainstream tunes, users gave them obscene names like “Getting gassed with Anne Frank”, “Auschwitz train singalong” and “Kill the Jews”.

After a report in the Times of Israel highlighted this content, which was often accompanied by offensive imagery uploaded by users, the Sweden-based music streaming service announced it would remove playlists with hateful names.

Meanwhile, efforts are continuing to force Facebook, Google and Twitter to delete the accounts of individuals and bots that spread hate daily.

For young people navigating the world via social media, hate speech seems to be unavoidable. According to Australia’s Office of the E-Safety Commissioner, more than 50% of young people have seen or heard hateful comments about a cultural or religious group online.

A white supremacist meme since removed from TikTok

Combatting hate speech on social media has proved an almost insurmountable challenge. Efforts are being made, but as fast as accounts are taken down, new ones emerge.

In response to inquiries about why it hosts hateful content, TikTok told HuffPost: “There is absolutely no place for discrimination, including hate speech, on this platform.”

And in TikTok’s defence, the Australia/Israel Review believes much of the material that is easiest to find has been removed. A search on TikTok for a range of offensive hashtags or account names associated with antisemitism brings up no results apart from the following message: “This phrase may be associated with hateful behaviour. TikTok is committed to keeping our community safe and working to prevent the spread of hate. For more information, we invite you to review our community guidelines.”

Spotify too has battled with the haters.

Spotify said it would remove the content identified in the Times of Israel report, adding, “The user-generated content in question violates our policy and is in the process of being removed. Spotify prohibits any user content that is offensive, abusive, defamatory, pornographic, threatening or obscene.”

Yet it clearly hadn’t got it all. The Australia/Israel Review came across playlists titled “Zionist Occupied Government” and “Killuminati: Music to conspire to anti-NWO/Rothschild Zionist”. Zionist Occupied Government (ZOG) and NWO, or New World Order, are both common antisemitic conspiracy theories.

Spotify’s policy is to remove hate content from its platform, but it seemingly relies on the public to alert the company to its presence, rather than proactively taking down such content. This is, unfortunately, the case with many social media companies.

One thing is certain, though: as quickly as the tech companies behind social media offerings remove hate content, it pops up again in different accounts, often with coded and convoluted messages designed to evade automatic filters.

Meanwhile, the “legacy” social media companies – Facebook, Twitter and Google, which also owns YouTube – are improving their approach towards halting the distribution of hateful content, but there is a very long way to go.

2019 will unfortunately be remembered as the year terrorists live-streamed themselves conducting hideous massacres. The most infamous perpetrator was Brenton Tarrant, who is charged with committing the Christchurch mosque massacres in March. Tarrant’s broadcast was emulated by the synagogue shooters in both Halle, Germany, and California, USA.

Facebook removed 1.5 million copies of Tarrant’s video of the Christchurch mosque massacres. A further 4.4 million pieces of content related to the terrorist attack were removed from the platform in the following four months. In the aftermath of the Yom Kippur synagogue shooting in Halle, the Global Internet Forum to Counter Terrorism, which counts Facebook, Twitter, Microsoft and YouTube as its members, swiftly acted to remove related videos that appeared on any of their platforms.

However, a recent report by the Online Hate Prevention Institute (OHPI) found that Google failed to completely remove material related to the 2019 terrorist incidents, even after comprehensive requests to do so. It also found that material from these massacres continues to circulate on other, more fringe, social media sites such as Twitch, 8Chan and Gab.

Following the Christchurch massacre, the Australian Government legislated to provide the Office of the E-Safety Commissioner with the power to issue takedown notices to tech companies that host material showing a murder or attempted murder, a terrorist act, torture, rape or kidnapping. These notices have been used a number of times since the legislation passed, according to the OHPI.

In a related process, the E-Safety Commissioner also referred the Christchurch manifesto and video to the Australian Classification Board, which subsequently banned them from distribution.

In the United States, where many of the biggest tech companies are based, Jewish representatives are continuing to push for necessary and ongoing reform.

A quick Twitter search brings up inflammatory content blaming the “Zionist occupied government” for all sorts of evils, from communism to the watering down of US gun laws. Another tweet calls for the Holocaust to “go on” with the hashtag #holohoax, and others spread rubbish about the “real death toll” from the Holocaust.

Meanwhile, Facebook and YouTube continue to broadcast the “TruNews” channel.

TruNews videos claim that the impeachment of US President Donald Trump is a “Jew coup” to install a “Jewish cabal”. Among a list of antisemitic garbage too long to mention, it also accuses the “synagogue of Satan” of decimating American culture through things like TV shows, homosexuality and hip hop music.

During January, the US Jewish community took its fight to Congress. Jonathan Greenblatt, the chief executive of the Anti-Defamation League (ADL), told a Congressional hearing that government needs to step in, arguing that while progress has been made toward removing hate speech, the companies themselves have not gone far enough.

ADL head Jonathan Greenblatt testifies on social media hate speech to the US Congress

“It’s long overdue for the social media companies to step up and shut down the neo-Nazis on their platforms,” Greenblatt told Congress. “Companies like Twitter and Facebook need to apply the same energy to protecting vulnerable users that they apply to protecting their corporate profits.”

Speaking at the same hearing, Nathan Diament from the US Orthodox Union called for artificial intelligence technology to be used to remove antisemitic and other hateful content.

Online hate is a Pandora’s box where the ugliness seems endless. As quickly as one hateful post or account is deleted, a dozen seem to pop up in its place. Legislators, regulators, research and advocacy organisations and concerned individuals will have to lift their game in 2020 if the fight to limit the spread and perniciousness of online hate is to make any significant progress.
